Embedding Generation

Use the embedding generation service to transform text into an embedding vector that powers search, retrieval, and semantic matching use cases.

How It Works

Embedding Generation converts text into high-dimensional vector representations that capture semantic meaning. Texts with similar meanings produce vectors that lie close together, which lets your applications search, match, and compare content by meaning rather than by exact wording.

Getting Started

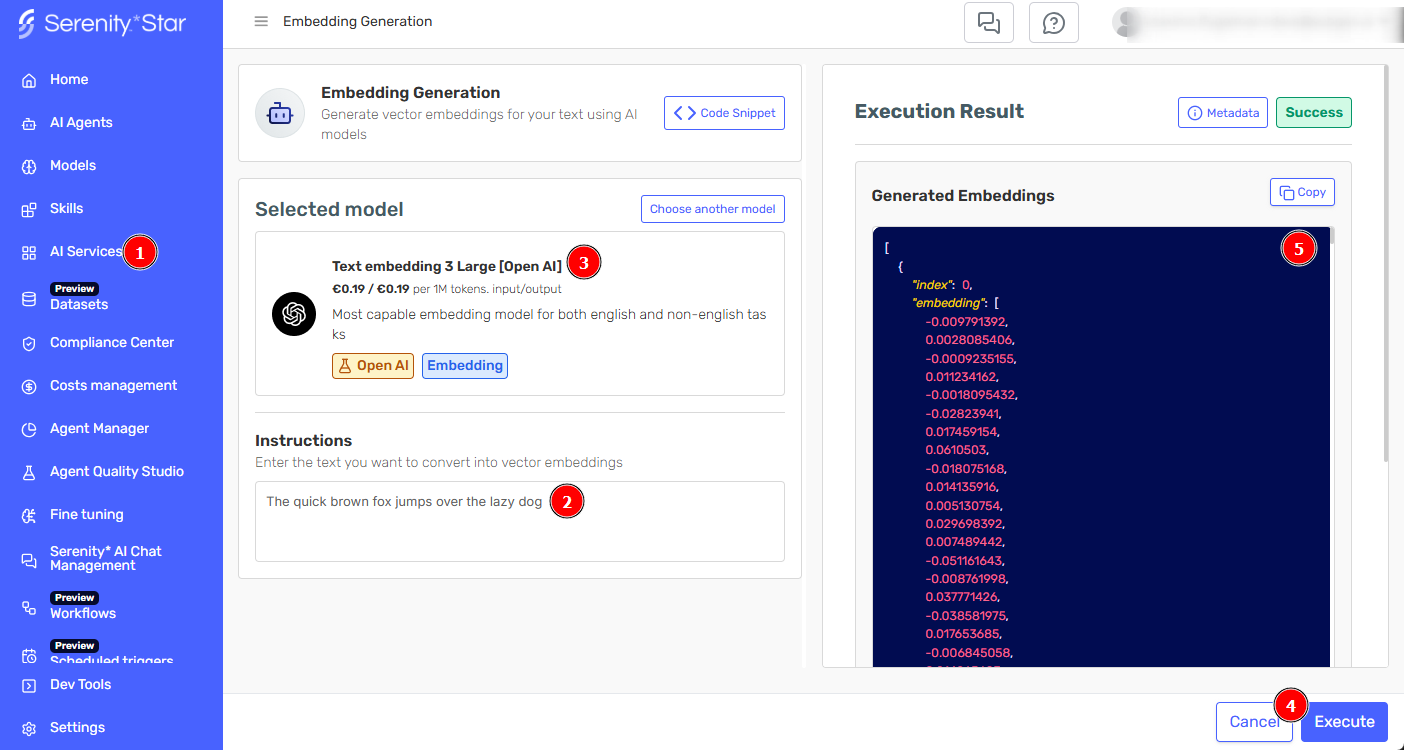

- Navigate to [AI Services > Embedding Generation](https://hub.serenitystar.ai/AIServices/embedding-generation) in Serenity* Star

- Enter or paste the text you want to convert into an embedding

- Select your preferred embedding model

- Click "Execute" to create the embedding vector

- Copy the resulting embeddings for use in your applications
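Once copied, the vector can be loaded into your application code. The following is a minimal sketch that assumes the copied output is a JSON array of floats. That format is an assumption, so check what the page actually shows. The short vector is a hypothetical placeholder.

```python
import json
import math

# Assumption: the embedding was copied from the UI as a JSON array of floats.
# This 4-value vector is a hypothetical placeholder; real embeddings have
# hundreds or thousands of dimensions depending on the selected model.
copied_output = "[0.12, -0.48, 0.33, 0.05]"


def parse_embedding(raw: str) -> list[float]:
    """Parse a JSON array into a list of floats, rejecting anything else."""
    values = json.loads(raw)
    if not isinstance(values, list) or not values:
        raise ValueError("expected a non-empty JSON array")
    vector = [float(v) for v in values]
    if not all(math.isfinite(v) for v in vector):
        raise ValueError("embedding contains non-finite values")
    return vector


embedding = parse_embedding(copied_output)
print(len(embedding))  # prints 4, the dimension of this placeholder vector
```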

What Are Embeddings?

Embeddings are numerical representations of text that capture semantic meaning. Similar concepts have similar embedding vectors, enabling:

- Semantic Search: Find content based on meaning, not just keywords

- Similarity Matching: Identify related documents or concepts

- Clustering: Group similar content automatically

- Recommendation Systems: Suggest relevant content to users

- Classification: Categorize text based on semantic features
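The idea that similar concepts have similar vectors is commonly measured with cosine similarity. The sketch below uses it to rank documents against a query, which is the core of semantic search. The three-dimensional vectors are made-up placeholders standing in for real embeddings from the service.

```python
import math


def cosine_similarity(a: list[float], b: list[float]) -> float:
    """Cosine of the angle between a and b: dot(a, b) / (|a| * |b|)."""
    if len(a) != len(b):
        raise ValueError("vectors must have the same dimension")
    dot = sum(x * y for x, y in zip(a, b))
    norm_a = math.sqrt(sum(x * x for x in a))
    norm_b = math.sqrt(sum(y * y for y in b))
    if norm_a == 0 or norm_b == 0:
        raise ValueError("cannot compare a zero vector")
    return dot / (norm_a * norm_b)


def semantic_search(
    query: list[float],
    docs: dict[str, list[float]],
    top_k: int = 2,
) -> list[tuple[str, float]]:
    """Return the top_k (doc_id, score) pairs, most similar first."""
    scored = [(doc_id, cosine_similarity(query, vec)) for doc_id, vec in docs.items()]
    scored.sort(key=lambda pair: pair[1], reverse=True)
    return scored[:top_k]


# Hypothetical toy embeddings; real ones come from the embedding service.
documents = {
    "refund-policy": [0.9, 0.1, 0.0],
    "shipping-times": [0.1, 0.9, 0.1],
    "return-an-item": [0.7, 0.3, 0.2],
}
query_vector = [0.85, 0.15, 0.05]

for doc_id, score in semantic_search(query_vector, documents):
    print(f"{doc_id}: {score:.3f}")
```

The query vector points in nearly the same direction as the two refund and return documents, so they outrank the shipping document even though no keywords are compared.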

Best Practices

To maximize embedding effectiveness:

- Chunk Size: Split large documents into meaningful chunks (100-500 words)

- Consistent Processing: Use the same model for indexing and querying

- Normalization: Normalize embeddings to unit length before comparing them, so that cosine similarity reduces to a simple dot product
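The chunking and normalization practices above can be sketched as follows. This minimal illustration treats whitespace-separated words as the chunk unit and uses a 300-word default with a 50-word overlap. Both values are hypothetical choices within the suggested 100-500 word range. Splitting on paragraph or sentence boundaries usually yields more meaningful chunks.

```python
import math


def chunk_words(text: str, chunk_size: int = 300, overlap: int = 50) -> list[str]:
    """Split text into chunks of at most chunk_size words.

    Consecutive chunks share `overlap` words so that context at a chunk
    boundary is not lost entirely (a common heuristic, not a service rule).
    """
    if chunk_size <= 0 or not 0 <= overlap < chunk_size:
        raise ValueError("need chunk_size > 0 and 0 <= overlap < chunk_size")
    words = text.split()
    step = chunk_size - overlap
    chunks = []
    for start in range(0, len(words), step):
        chunks.append(" ".join(words[start:start + chunk_size]))
        if start + chunk_size >= len(words):
            break  # the last chunk already reaches the end of the text
    return chunks


def l2_normalize(vector: list[float]) -> list[float]:
    """Scale a vector to unit length so dot product equals cosine similarity."""
    norm = math.sqrt(sum(x * x for x in vector))
    if norm == 0:
        raise ValueError("cannot normalize a zero vector")
    return [x / norm for x in vector]


def dot(a: list[float], b: list[float]) -> float:
    return sum(x * y for x, y in zip(a, b))


sample = " ".join(f"word{i}" for i in range(700))
print(len(chunk_words(sample)))  # 3 chunks: words 0-299, 250-549, 500-699

a = l2_normalize([3.0, 4.0])  # [0.6, 0.8]
b = l2_normalize([4.0, 3.0])  # [0.8, 0.6]
print(round(dot(a, b), 2))    # 0.96, the cosine similarity of the originals
```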

API Access

Embedding Generation is also available via API, including support for bulk embedding generation.

See the API documentation for detailed integration instructions.
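For bulk generation, sending texts in batches rather than making one request per text can reduce request overhead. The sketch below shows only the batching logic. `embed_batch` is a hypothetical stand-in for whatever call the Serenity* Star API actually exposes, so consult the API documentation for the real endpoint, authentication, and limits. The batch size of 64 is also an assumption.

```python
from typing import Callable, Iterator, Sequence

# Hypothetical signature: takes a list of texts, returns one vector per text.
EmbedBatchFn = Callable[[list[str]], list[list[float]]]


def batched(items: Sequence[str], size: int) -> Iterator[list[str]]:
    """Yield consecutive slices of at most `size` items."""
    if size <= 0:
        raise ValueError("batch size must be positive")
    for start in range(0, len(items), size):
        yield list(items[start:start + size])


def embed_all(
    texts: Sequence[str],
    embed_batch: EmbedBatchFn,
    batch_size: int = 64,
) -> list[list[float]]:
    """Embed every text batch by batch, preserving input order."""
    vectors: list[list[float]] = []
    for batch in batched(texts, batch_size):
        result = embed_batch(batch)
        if len(result) != len(batch):
            raise RuntimeError("embedding call returned the wrong number of vectors")
        vectors.extend(result)
    return vectors


# Fake embedder for local testing only: maps each text to [length, word count].
def fake_embed_batch(batch: list[str]) -> list[list[float]]:
    return [[float(len(t)), float(len(t.split()))] for t in batch]


texts = [f"document number {i}" for i in range(150)]
vectors = embed_all(texts, fake_embed_batch, batch_size=64)
print(len(vectors))  # 150 vectors from 3 batches (64 + 64 + 22)
```

In a real integration, `fake_embed_batch` would be replaced by a function that calls the API and returns vectors in the same order as its input.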

For optimal search results, generate embeddings using the same model for both indexing your content and processing user queries. Embeddings from different models are not directly comparable, so mixing models degrades retrieval accuracy.
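One way to enforce this is to store the model name alongside every vector and refuse to compare vectors produced by different models. This is a minimal sketch. The `StoredEmbedding` type and the model names are hypothetical.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class StoredEmbedding:
    model: str                 # hypothetical: name of the model that produced the vector
    vector: tuple[float, ...]


def check_same_model(query: StoredEmbedding, indexed: StoredEmbedding) -> None:
    """Raise if two embeddings came from different models or differ in size."""
    if query.model != indexed.model:
        raise ValueError(
            f"model mismatch: query uses {query.model!r}, index uses {indexed.model!r}"
        )
    if len(query.vector) != len(indexed.vector):
        raise ValueError("dimension mismatch between query and indexed vector")


doc = StoredEmbedding(model="model-a", vector=(0.1, 0.2, 0.3))
ok_query = StoredEmbedding(model="model-a", vector=(0.3, 0.2, 0.1))
bad_query = StoredEmbedding(model="model-b", vector=(0.3, 0.2, 0.1))

check_same_model(ok_query, doc)  # passes silently
try:
    check_same_model(bad_query, doc)
except ValueError as err:
    print(err)  # model mismatch: query uses 'model-b', index uses 'model-a'
```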

Embeddings are particularly powerful when combined with agent knowledge bases, enabling semantic understanding of user questions and precise information retrieval.